AIOps vs. MLOps vs. LLMOps

Finding the best fit for your AI strategy.

Hi 👋🏻, Naveen here — welcome to AI x Factor, where I explore the fast-evolving intersection of AI, leadership, and technology.

AI adoption is accelerating.

But managing AI models and systems is becoming more complex.

Three operational frameworks have emerged:

AIOps – AI for IT operations

MLOps – Operationalising machine learning (ML) and managing the ML lifecycle

LLMOps – Managing the lifecycle of LLMs and LLM-powered applications.

Each solves different challenges.

Each supports different business goals.

And they often overlap or complement one another.

In this post, I’ll break down what each of these “Ops” entails, highlight their benefits, and provide guidance to help organisations choose the right fit for their AI strategy.

What Is AIOps?

AIOps = AI for IT Operations.

It automates IT monitoring, incident response, and performance optimisation.

Think of AIOps as an intelligent IT assistant that analyses massive amounts of IT data (logs, metrics, events) in real time to detect anomalies, troubleshoot issues, and even take automated corrective actions.

In essence, AIOps platforms act as smart command centers that streamline everything from system monitoring to incident response and capacity optimisation.

Key Benefits:

Faster incident detection and resolution: AIOps uses real-time analytics and anomaly detection to catch issues early and, in many cases, resolve them automatically. This accelerates problem resolution and helps IT teams fix incidents before they escalate.

Reduced downtime: By predicting potential failures and proactively addressing them, AIOps minimises unplanned downtime. The system can correlate patterns across infrastructure to prevent outages, which lowers the operational costs associated with downtime.

Improved system visibility: AIOps provides enhanced observability into complex IT environments. It consolidates data from various sources and uses AI to generate insights, giving teams a clearer picture of system performance and health. This improved visibility helps in capacity planning and informed decision-making.

Automation of routine tasks: Repetitive tasks like alert triage, log analysis, or restarting failed services can be handled automatically. By automating these workflows, AIOps frees up IT staff to focus on strategic projects instead of firefighting.
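
To make the anomaly-detection idea concrete, here is a minimal Python sketch of one common approach: a rolling z-score over a single metric stream. It is an illustration under stated assumptions, not how any particular AIOps platform works. The function name `detect_anomalies`, the window size, the threshold, and the latency values are all made up for this example.

```python
from collections import deque
from statistics import mean, stdev


def detect_anomalies(values, window=5, threshold=3.0):
    """Return indices of values that sit more than `threshold` standard
    deviations away from the mean of the preceding `window` values."""
    history = deque(maxlen=window)  # trailing window of recent readings
    anomalies = []
    for i, value in enumerate(values):
        if len(history) == window:
            mu = mean(history)
            sigma = stdev(history)
            # Skip flat windows (sigma == 0) to avoid flagging on zero variance
            if sigma > 0 and abs(value - mu) > threshold * sigma:
                anomalies.append(i)
        history.append(value)
    return anomalies


# Hypothetical response-time readings in milliseconds, with one spike
latency_ms = [100, 102, 98, 101, 99, 100, 450, 101, 99]
print(detect_anomalies(latency_ms))  # [6]
```

A real platform would correlate many signals rather than one metric, but the principle is the same: learn what normal looks like, then flag departures from it.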

Best Fit For: Organisations that prioritise operational efficiency and IT resilience.

If maintaining large-scale infrastructure with high uptime is a key goal, AIOps is the ideal approach.

Enterprises with complex, distributed systems (e.g. large e-commerce platforms or global banks) benefit from AIOps to keep services running smoothly.

In short, any environment where real-time monitoring and rapid incident response are critical is a strong candidate for AIOps adoption.

What Is MLOps?

MLOps (Machine Learning Operations) is the practice of operationalising the ML model lifecycle – from development to deployment to monitoring – in a reliable and efficient manner.

It combines principles from DevOps, data engineering, and machine learning to streamline how ML models are developed, tested, deployed, and maintained in production.

In other words, MLOps provides the end-to-end framework that turns your data science experiments into robust, scalable AI solutions in the real world.

Key Benefits:

Streamlined ML lifecycle: MLOps standardises data prep, training, evaluation, deployment, and maintenance, helping models move from lab to production faster and with fewer errors.

Version control & reproducibility: By tracking data, code, and models, teams ensure experiments are reproducible and models can be rolled back if needed — key for compliance and debugging.

CI/CD & automation: Extends CI/CD practices to ML. Automated pipelines handle retraining, validation, and deployment with minimal manual effort, speeding up delivery of ML features.

Continuous monitoring: Tracks performance, accuracy, and data drift. Alerts trigger retraining or tuning, keeping models accurate and relevant over time.

Collaboration: Bridges data science and IT operations with standardised workflows and shared tooling, reducing friction and improving teamwork.
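
The continuous-monitoring idea above can be sketched in a few lines of Python. This example uses the Population Stability Index (PSI), one widely used drift measure, to compare a live feature sample against the training baseline. The function names, the 10-bin layout, and the 0.2 retraining threshold are assumptions for illustration; teams usually tune these per model.

```python
import math


def population_stability_index(baseline, live, bins=10):
    """Compare two samples of one numeric feature.
    Returns 0.0 when both samples have identical bin proportions."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline

    def proportions(sample):
        counts = [0] * bins
        for x in sample:
            idx = int((x - lo) / width)
            # Clip out-of-range values into the edge bins
            counts[min(max(idx, 0), bins - 1)] += 1
        # Floor each proportion so empty bins do not cause log(0)
        return [max(c / len(sample), 1e-6) for c in counts]

    p, q = proportions(baseline), proportions(live)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


def should_retrain(baseline, live, threshold=0.2):
    """Hypothetical retraining trigger: fire when drift exceeds the threshold."""
    return population_stability_index(baseline, live) > threshold


# Made-up feature values: a training baseline and a shifted live sample
baseline = [i / 100 for i in range(100)]
shifted = [x + 0.5 for x in baseline]
print(should_retrain(baseline, baseline))  # False
print(should_retrain(baseline, shifted))   # True
```

In a pipeline, a check like this would typically run on a schedule and raise an alert or start a retraining job rather than print a value.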

Best Fit For: Organisations that are data-driven and building predictive models at scale.

If you have a team of data scientists developing ML models (for example, for customer recommendations, fraud detection, or predictive maintenance), MLOps is essential to reliably get those models into production and keep them performing.

Companies like tech firms with personalisation algorithms, banks with credit scoring models, or manufacturers using ML for equipment maintenance all benefit from MLOps.

Essentially, any business looking to deploy and manage ML models as a core part of their operations will need MLOps to ensure those models are delivered efficiently and continue to perform well in production.

What Is LLMOps?

LLMOps (Large Language Model Operations) is a specialised offshoot of MLOps focused on the unique challenges of deploying and managing the lifecycle of LLMs and LLM-powered applications.

Models like GPT-5, Anthropic Claude, or Meta’s LLaMA are massive in scale and resource-intensive, and they can exhibit unpredictable behaviours (like “hallucinating” incorrect facts or showing bias).

LLMOps provides the tools and practices to handle the entire lifecycle of LLM-powered applications, from fine-tuning and prompt design to deployment and monitoring of model outputs.

Key Benefits:

Efficient model handling: Optimises training and serving of large models with fine-tuning, compression, batching, and hardware efficiencies to keep costs manageable.

Prompt optimisation: Refines prompts with techniques like chaining and retrieval augmentation to produce more accurate outputs.

Monitoring & guardrails: Uses filters, bias detection, and feedback loops. Continuous checks (human or automated) help keep responses safe and reliable.

Governance & compliance: Tracks training, controls access, and enforces privacy and ethical standards. Builds trust through bias mitigation and transparency.
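
As a rough illustration of an output guardrail, the Python sketch below redacts email addresses and blocks responses containing flagged terms before they reach the user. Everything here is hypothetical: the `apply_guardrails` function, the regular expression, and the blocked-term list are assumptions for this example, and production systems typically layer several such checks alongside human review.

```python
import re

# Simple email matcher for illustration; real PII detection needs broader coverage
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")

# Hypothetical terms that should never appear in a customer-facing answer
BLOCKED_TERMS = {"internal-only", "password"}


def apply_guardrails(response):
    """Redact emails, then flag the response if it contains a blocked term."""
    redacted = EMAIL_PATTERN.sub("[REDACTED_EMAIL]", response)
    lowered = redacted.lower()
    flags = sorted(term for term in BLOCKED_TERMS if term in lowered)
    return {"text": redacted, "allowed": not flags, "flags": flags}


safe = apply_guardrails("Contact jane@example.com for help")
print(safe["text"])     # Contact [REDACTED_EMAIL] for help
print(safe["allowed"])  # True

blocked = apply_guardrails("Your password is hunter2")
print(blocked["allowed"], blocked["flags"])  # False ['password']
```

Logging the `flags` field over time gives the feedback loop mentioned above: recurring flags point to prompts or fine-tuning data that need attention.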

Best Fit For: Businesses that are adopting generative AI and conversational systems.

If you are building applications like intelligent chatbots, virtual customer service agents, generative content platforms, or code assistants, LLMOps is what will keep those systems running smoothly.

For instance,

An online retailer launching an AI chatbot would use LLMOps to fine-tune a language model on its customer service data, monitor the bot’s answers to customer queries, and continually improve responses based on feedback.

A software company offering an AI coding assistant (like GitHub Copilot, Cursor, or Windsurf) relies on LLMOps to manage model updates and maintain code generation quality.

In short, any scenario involving LLMs in production – especially where accuracy, reliability, and safety are paramount – calls for LLMOps practices.

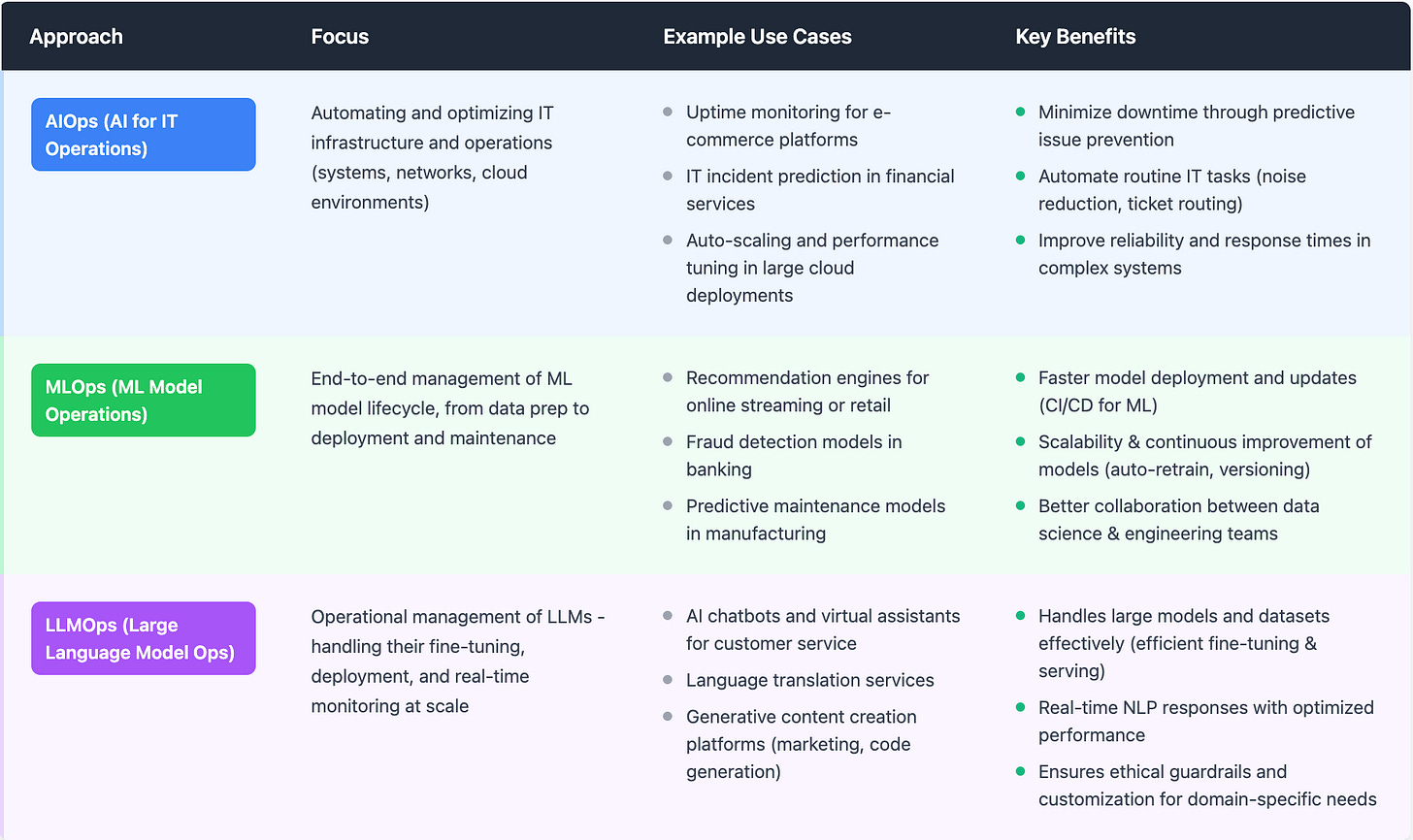

Comparing AIOps, MLOps, and LLMOps

All three “Ops” frameworks aim to make AI systems more reliable, scalable, and efficient, but they differ in focus and tackle distinct challenges. Here’s a breakdown of how they compare:

Purpose and Focus: AIOps targets IT operations, MLOps targets machine learning workflows, and LLMOps targets large language model applications.

Overlap and Shared Principles: Despite serving different domains, these practices share a lot of underlying principles. All three emphasise automation, continuous monitoring, and rapid iteration to improve system performance. There is also a trend of convergence – tools and techniques from one domain are being applied to others as AI matures.

We already see LLMOps as a specialised offshoot of MLOps, and AIOps platforms may incorporate outputs from ML models to inform decisions.

In many cases, an organisation’s AI Ops strategy might involve a combination of these domains working together.

Key Differences: The table below summarises some key differences and use case scenarios for AIOps vs MLOps vs LLMOps:

Table: A high-level comparison of AIOps, MLOps, and LLMOps in terms of their focus, use cases, and benefits. ©Naveen Bhati.

As shown above,

AIOps shines in scenarios requiring automation of IT systems and quick incident resolution.

MLOps excels in managing the complexity of deploying many ML models and keeping them current.

LLMOps is indispensable when dealing with the newest generation of AI – LLMs – where issues of scale, reliability and AI safety come to the forefront.

Choosing the Right Fit for Your AI Strategy

Selecting between AIOps, MLOps, and LLMOps (or determining the right combination of them) should come down to your business goals and technical priorities rather than hype.

Here are some key considerations and a simple decision framework:

Business objectives: Match your AI goals to the right ops. AIOps boosts IT reliability, MLOps powers predictive models, and LLMOps enables generative AI. Most organisations will need a mix.

Team expertise: Use AIOps if you have strong IT ops, MLOps if you have data science and engineering talent, and LLMOps if you’re ready to invest in NLP, prompt engineering, and ethics expertise.

Infrastructure & tools: AIOps builds on existing IT monitoring, MLOps requires pipelines and versioning tools, while LLMOps needs heavy compute, cloud services, and frameworks like LangChain.

Regulatory & compliance: AIOps helps keep IT operations secure, MLOps tracks models and data for auditability, and LLMOps adds fairness and bias monitoring, which is essential for regulated, customer-facing AI.

In summary, match the “Ops” to your needs:

If IT uptime and resilience are the pressing issues, start with AIOps.

If your value from AI comes via predictive models and analytics, focus on MLOps.

If you’re investing in generative AI and LLM-based innovations, develop LLMOps capabilities.

In many cases, these are not either/or choices – mature organisations may incorporate elements of all three.

The key is to prioritise based on business impact: tackle the areas that will deliver the most value or mitigate the biggest risks for your strategy.
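
The decision framework above can be expressed as a small lookup. This Python sketch only restates the three rules in code; the priority labels (`it_uptime`, `predictive_models`, `generative_ai`) and the function name `recommend_ops` are invented for illustration.

```python
# Maps a business priority (hypothetical labels) to the matching practice
OPS_BY_PRIORITY = {
    "it_uptime": "AIOps",
    "predictive_models": "MLOps",
    "generative_ai": "LLMOps",
}


def recommend_ops(priorities):
    """Return the Ops practice for each priority, in the order given,
    so the most pressing priority comes first."""
    unknown = [p for p in priorities if p not in OPS_BY_PRIORITY]
    if unknown:
        raise ValueError(f"Unknown priorities: {unknown}")
    return [OPS_BY_PRIORITY[p] for p in priorities]


print(recommend_ops(["generative_ai", "it_uptime"]))  # ['LLMOps', 'AIOps']
```

Passing more than one priority returns more than one practice, which mirrors the point that these are rarely either/or choices.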

Future of AI Operations

The lines between AIOps, MLOps, and LLMOps will continue to blur.

Tools and practices are converging, and future platforms may unify IT monitoring, ML pipelines, and LLM management into a single “AI Ops” layer.

Governance, ethics, and compliance will become central. Expect stricter standards around bias, data privacy, and auditability. Ethical checks will shift from “nice-to-have” to mandatory in AI pipelines.

Automation will deepen.

Just as AIOps automates fixes, ML and LLM pipelines will increasingly self-tune, retrain, and optimise.

In short: AI will manage AI.

The future is convergence, governance, and automation — businesses that adopt early will stay ahead.

Conclusion

AIOps, MLOps, and LLMOps each solve different problems but share one goal: keeping AI systems reliable, scalable, and safe.

The key is alignment with strategy — not chasing buzzwords.

AIOps ensures infrastructure resilience.

MLOps delivers robust predictive models.

LLMOps enables responsible & reliable generative AI.

Often, the strongest approach is a mix. The right operational backbone helps organisations innovate while ensuring governance, security, and compliance.

👉 Start with business goals.

👉 Adopt the “Ops” that supports them.

👉 Build AI that is powerful, responsible, and future-ready.

Ready to level up your Ops?

While others debate AI's future, smart companies are capturing value today. Factor specialises in identifying and implementing high-ROI AI opportunities that deliver results in weeks, not years.

What you get in our FREE Discovery Call:

✅ AI readiness assessment for your specific industry

✅ 3 immediate implementation opportunities

✅ ROI projections based on real client results

✅ Technology roadmap tailored to your business

Factor: Your AI Transformation Partner

Until next time,

Naveen & AI x Factor Team